So I’ve been tinkering again.

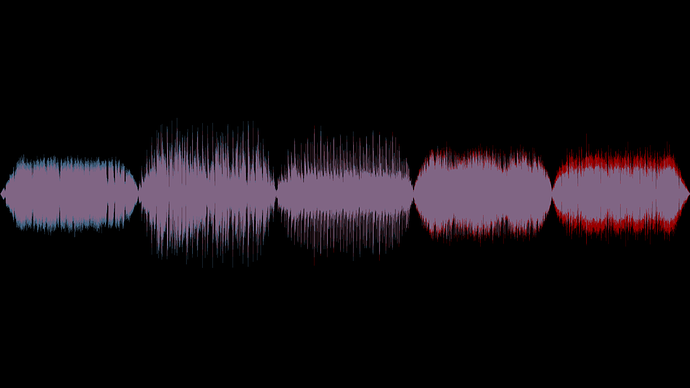

I’m chasing that unicorn of being able to balance really old songs and new songs with vastly different audio profiles.

@Ag_U, your talk of the 80 Hz somewhat sparked the line of thinking that I’m working on. So, I’m adding more to the Audacity data calculation and turning down some of the weight on the DNR. The frequency analysis already carries a lot of the weight that the DNR conceptually covers, so this avoids a slight double-counting issue. Basically, I’ve taken to splitting the frequency range into separate sections, taking the average dB for each section, and then combining those factors to produce a single value.

This allows a song with a lot of low end to be brought down for that low end, whereas a song with less low end wouldn’t be. Equally, a song with a lot of brass would be brought down where one without would not.
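
To make the band-by-band idea concrete, here is a minimal Python sketch. It assumes the spectrum is available as (frequency in Hz, level in dB) pairs, such as rows from a spectrum export. The band edges, the weights, and the names `BANDS`, `WEIGHTS`, `band_averages`, and `combined_value` are all placeholders for illustration, not the actual numbers or formula behind the calculation, and the combining step is assumed to be a simple weighted mean.

```python
# Hypothetical band edges (Hz) and weights, chosen only for illustration.
BANDS = {
    "low": (20.0, 250.0),
    "mid": (250.0, 2000.0),
    "high": (2000.0, 20000.0),
}
WEIGHTS = {"low": 0.5, "mid": 0.3, "high": 0.2}


def band_averages(spectrum, bands=BANDS):
    """Average the dB readings that fall inside each band.

    spectrum: (frequency_hz, level_db) pairs.
    This is a plain arithmetic mean of the dB figures, not a power average.
    """
    points = list(spectrum)  # allow any iterable, including generators
    averages = {}
    for name, (lo, hi) in bands.items():
        levels = [db for freq, db in points if lo <= freq < hi]
        # None marks a band with no readings so it can be skipped later.
        averages[name] = sum(levels) / len(levels) if levels else None
    return averages


def combined_value(averages, weights=WEIGHTS):
    """Weighted mean of the per-band averages, ignoring empty bands."""
    total = 0.0
    weight_sum = 0.0
    for name, avg in averages.items():
        if avg is None:
            continue
        total += weights[name] * avg
        weight_sum += weights[name]
    if weight_sum == 0:
        raise ValueError("no spectrum data in any band")
    return total / weight_sum


# Two made-up spectra that differ only in the low end.
bass_heavy = [(60, -20.0), (100, -22.0), (500, -35.0), (5000, -45.0)]
bass_light = [(60, -40.0), (100, -42.0), (500, -35.0), (5000, -45.0)]

print(combined_value(band_averages(bass_heavy)))
print(combined_value(band_averages(bass_light)))
```

With these made-up numbers the bass-heavy spectrum scores higher than the bass-light one, so it would be the one brought down. Changing the entries in `WEIGHTS` changes how much each part of the range counts toward the final value.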

So it’s looking at the frequency ranges more closely than I was doing originally, and this seems to be providing a possible way forward. It’s getting teasingly close to working. I still feel that it’s slightly off, but it’s really close. I’ll probably post another test result file tomorrow.

Cheers,

Jayson